In today’s digital landscape, ensuring both functional accuracy and real-world user experience is critical—and this applies across both iOS and Android ecosystems. Real Device Testing (RDT) brings these dimensions together by enabling teams to validate how applications behave on actual devices, under real conditions, at scale.

Traditional testing approaches often treat functionality and system behavior as separate concerns. However, modern applications demand a unified approach—one that ensures your application not only works as expected but also delivers a seamless experience across diverse devices, operating systems, and environments.

Real Device Testing is the discipline that closes this gap. It ensures that every interaction, every screen, and every transaction is validated in the same conditions your users experience daily—on real hardware, real networks, and real usage patterns.

| 53% | ~7% | ~$5.8B | 123% |
| --- | --- | --- | --- |
| Users abandon if the load exceeds 3 seconds. (1)* | A 1-second delay in page load time can reduce conversions by ~7%. (2)* | Annual revenue lost to poor mobile performance. (3)* | As load time increases from 1s to 10s, bounce probability increases by up to 123%. (4)* |

The gap no one talks about

Organizations that invest deeply in both functional testing and load testing still ship releases that degrade visibly on real mobile hardware. The reason is simple: neither discipline, in isolation, captures what users actually experience.

Consider a typical e-commerce checkout flow. Your test suite confirms the feature works. Your load tests confirm the backend processes concurrent orders with a strong API response time. But that API response is only the beginning of the user’s wait. On a real device, it is followed by JSON deserialization on a constrained CPU, layout inflation, image decoding on a shared GPU, JavaScript execution in a WebView, and final screen render — all of which vary dramatically across the device ecosystem.

On an emulator running on a developer’s workstation, those operations complete almost instantly. On a mid-tier Android device with modest processing power and shared RAM under background process pressure, the same operations can multiply perceived latency several times over — pushing users well past the patience threshold that triggers abandonment.
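The checkout example can be made concrete with a rough latency budget. The sketch below is illustrative only: the `ScreenLoad` class, its stage names, and every number in it are assumptions chosen to mirror the stages listed above, not measurements, and it assumes the stages run strictly one after another.

```python
from dataclasses import dataclass


@dataclass
class ScreenLoad:
    """Hypothetical per-stage timings (ms) for one checkout screen load."""
    api_response_ms: float
    json_deserialize_ms: float
    layout_inflate_ms: float
    image_decode_ms: float
    webview_js_ms: float
    render_ms: float

    def perceived_latency_ms(self) -> float:
        # Simplifying assumption: the stages run strictly one after another.
        return (self.api_response_ms + self.json_deserialize_ms
                + self.layout_inflate_ms + self.image_decode_ms
                + self.webview_js_ms + self.render_ms)

    def client_share(self) -> float:
        # Fraction of the user's wait spent on the device, not the backend.
        total = self.perceived_latency_ms()
        return (total - self.api_response_ms) / total


# Illustrative numbers only; they are not measurements.
emulator = ScreenLoad(200, 5, 10, 10, 20, 5)
mid_tier = ScreenLoad(200, 60, 120, 150, 300, 70)

for name, load in (("emulator", emulator), ("mid-tier device", mid_tier)):
    print(f"{name}: {load.perceived_latency_ms():.0f} ms total, "
          f"{load.client_share():.0%} spent on the client")
```

With identical API response times in both rows, the entire difference in total wait comes from client-side work, which is exactly the part a backend load test never sees.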

What RDT actually means

Real Device Testing involves testing mobile applications and websites on actual physical devices — not emulators or simulators. While emulators retain a legitimate role in catching functional regressions quickly in the CI loop, they cannot replicate the physical characteristics and constraints that determine how an application truly performs in users’ hands.

What emulators do well

Catching functional regressions quickly in CI, validating API contract conformance, running unit-level performance micro-benchmarks, and providing fast developer feedback without consuming real device capacity.

What only real devices reveal

Thermal throttling, memory pressure from the OS, genuine network variability, GPU rendering contention, battery drain patterns, cold start performance across hardware tiers, and the full user experience under real-world conditions.

RDT bridges the gap between the digital realm and the physical world, connecting functional accuracy on one side with performance at scale on the other. It is not a replacement for emulator-based testing; it is the layer that completes your testing strategy.

Performance dimensions only real devices expose

➣ GPU rendering performance and frame drops

Smooth 60fps requires the GPU to complete each frame within about 16.7ms (1000ms / 60). Under concurrent background activity, real device GPUs experience contention that causes jank: visible stutters users perceive as “the app feeling slow” even when API response times are healthy. Emulators render through software virtualization layers that bear no resemblance to a real mobile GPU pipeline.
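The frame budget arithmetic is simple enough to sketch. The helper names `frame_budget_ms` and `jank_ratio` and the sample frame durations below are assumptions for illustration; in practice the durations would come from whatever frame timing data your device tooling exports.

```python
def frame_budget_ms(refresh_hz: float = 60.0) -> float:
    """Time available to produce one frame at the given refresh rate."""
    return 1000.0 / refresh_hz


def jank_ratio(frame_times_ms, refresh_hz: float = 60.0) -> float:
    """Share of frames that took longer than the frame budget."""
    if not frame_times_ms:
        return 0.0
    budget = frame_budget_ms(refresh_hz)
    missed = sum(1 for t in frame_times_ms if t > budget)
    return missed / len(frame_times_ms)


# Hypothetical frame durations (ms) recorded on a device while scrolling.
sample = [12.0, 15.5, 16.0, 22.4, 16.9, 33.1, 14.2, 15.0]

print(f"budget at 60 Hz: {frame_budget_ms():.2f} ms")
print(f"janky frames: {jank_ratio(sample):.1%}")
```

Passing `refresh_hz=120.0` halves the budget, which is worth keeping in mind when the device matrix includes high refresh rate displays.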

➣ Battery drain as a performance indicator

Excessive battery consumption signals inefficient CPU wake cycles, poorly batched network requests, or badly scheduled background processing. Applications that drain the battery quickly are flagged by OS battery management systems, which then restrict background activity and degrade performance further. This feedback loop cannot be measured on an emulator.

➣ Cold start and startup time variance

A cold start completing in 1.1 seconds on a flagship device may take 3.8 seconds on an entry-level device — directly crossing the threshold at which users perceive the app as “slow to open.” Storage I/O speed, available RAM, and competing background processes all affect cold start duration in ways only real hardware can expose.

➣ CPU thermal throttling under sustained load

Modern mobile processors aggressively reduce clock speeds when thermal limits are reached. During sustained sessions or high-frequency API polling, real chipsets can throttle to a fraction of peak clock speed — manifesting as frame rate drops and transaction timeouts that never appear in emulated environments running on a workstation’s unconstrained CPU.

➣ Memory pressure and background process killing

On devices with 3 GB or 4 GB of RAM, still widely used in growth markets, Android’s Low Memory Killer daemon aggressively reclaims memory from background processes. This causes transaction failures and crash-to-background events that are entirely invisible to emulators running with generous host machine RAM allocations.

➣ Real network latency and packet loss profiles

Real-world mobile networks exhibit packet loss, jitter, sudden signal drops, and mid-session handoffs between cell towers or Wi-Fi access points. Artificial bandwidth throttling in emulators cannot reproduce these conditions. Testing performance under genuine network variability — including LTE-to-5G handoffs — requires physical devices on real networks.
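Once probes have been run from a physical device, the resulting round-trip times can be summarized into a loss and jitter profile. The sketch below assumes a hypothetical list of probe results in which `None` marks a lost probe, and it uses one simple definition of jitter (the mean absolute difference between consecutive received round-trip times); other definitions exist.

```python
from statistics import mean


def network_profile(rtts_ms):
    """Summarize probe results; None marks a probe that never returned."""
    if not rtts_ms:
        return {"loss_ratio": 0.0, "mean_rtt_ms": None, "jitter_ms": 0.0}
    received = [r for r in rtts_ms if r is not None]
    lost = len(rtts_ms) - len(received)
    # Jitter as the mean absolute change between consecutive received RTTs.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "loss_ratio": lost / len(rtts_ms),
        "mean_rtt_ms": mean(received) if received else None,
        "jitter_ms": mean(deltas) if deltas else 0.0,
    }


# Hypothetical probe results (ms) from a device moving between cells.
probes = [40.0, 42.0, None, 120.0, 45.0, None, 43.0, 41.0, 300.0, 44.0]
profile = network_profile(probes)
print(profile)
```

A profile like this, with occasional spikes and lost probes, looks very different from the flat latency produced by a fixed bandwidth cap.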

How Cavisson Systems delivers RDT

At Cavisson, we built our performance engineering platform on the principle that application performance is a full-stack property — not a server-side attribute. RDT is deeply integrated with our capabilities, enabling organizations to instrument, execute, and analyze performance across infrastructure and client device tiers within a unified workflow.

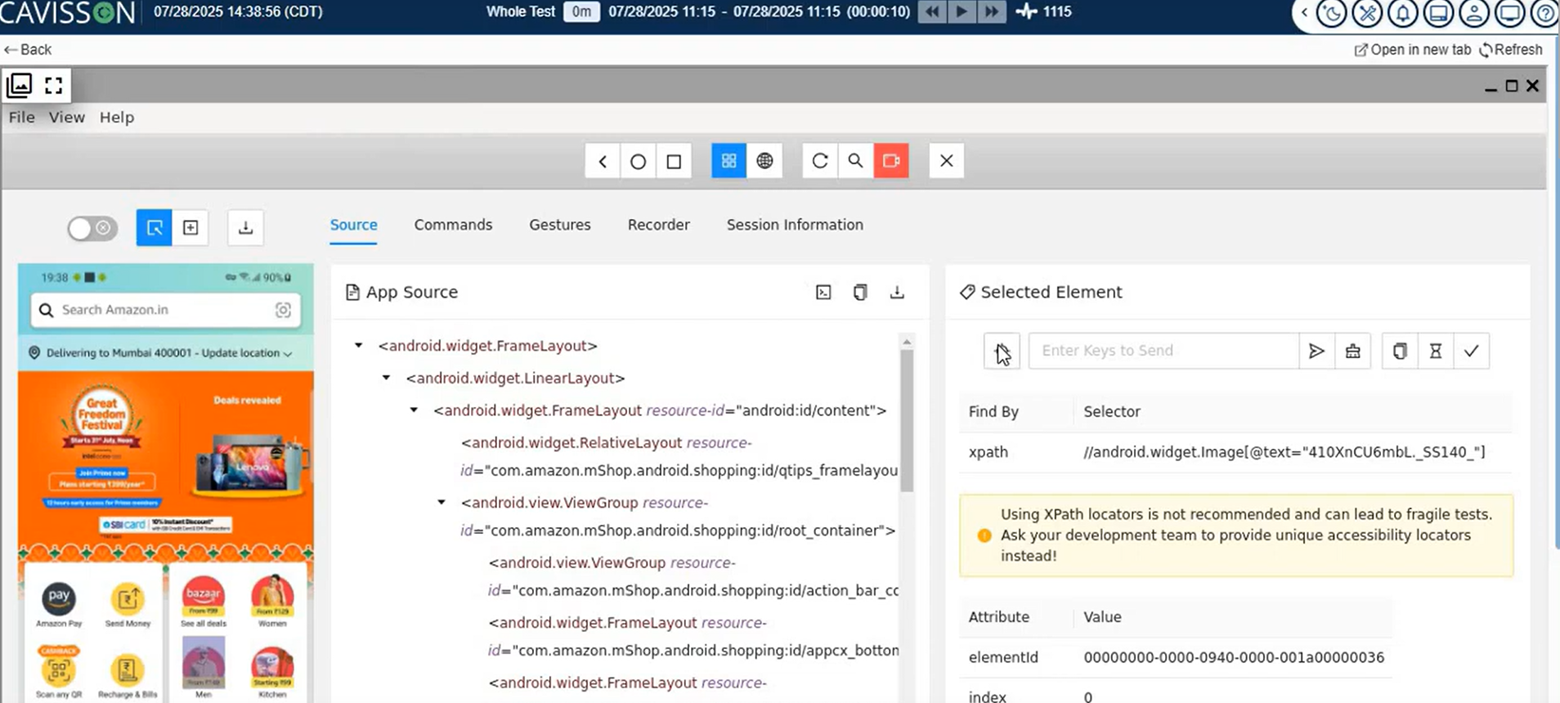

Click-Through Recording

Cavisson makes mobile test creation simple with intuitive click-through recording. Teams can capture real user journeys directly on devices while leveraging advanced inspection capabilities like XPath validation and attribute checks. This enables faster script creation while ensuring accuracy and flexibility for complex test scenarios.

Flexible Device Selection

Cavisson offers complete flexibility in device access. Teams can integrate with popular device farms like BrowserStack, LambdaTest, and AWS Device Farm, use Cavisson’s built-in device farm, or adopt a Bring Your Own Device (BYOD) approach. This ensures testing aligns with real user environments across regions and device types.

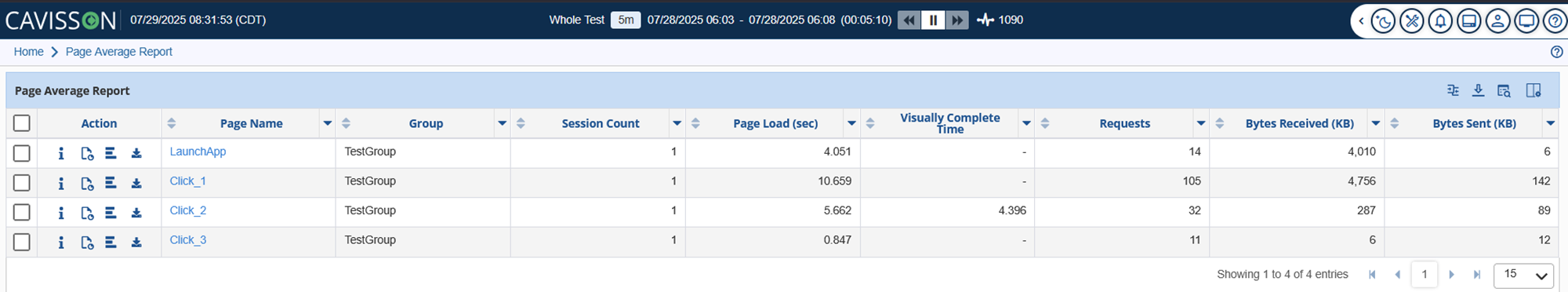

Meaningful Performance Results

By executing tests on real devices instead of emulators, Cavisson delivers performance insights that truly reflect real-world usage. Teams gain accurate visibility into responsiveness, speed, and behavior under actual hardware and network conditions, enabling more reliable performance optimization.

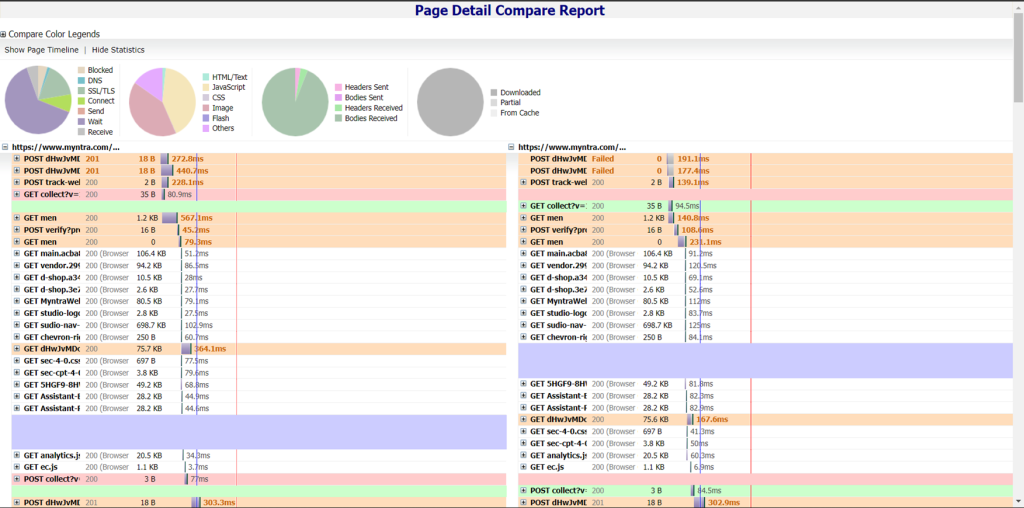

Timing Aggregation & Comparison

Cavisson aggregates performance data across devices, operating systems, and configurations, allowing teams to benchmark results and identify inconsistencies. This helps optimize application performance across the entire target device matrix, including both high-end and low-resource environments.
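As a generic illustration of this kind of aggregation (not Cavisson's API), the sketch below groups cold start timings by device tier, takes the median per tier, and flags tiers that are more than a chosen factor slower than the fastest one. The tier labels, sample values, and the `factor` threshold are all assumptions.

```python
from collections import defaultdict
from statistics import median


def median_by_device(samples):
    """samples: iterable of (device_label, duration_ms) pairs."""
    grouped = defaultdict(list)
    for device, duration_ms in samples:
        grouped[device].append(duration_ms)
    return {device: median(values) for device, values in grouped.items()}


def slow_devices(medians, factor: float = 2.0):
    """Devices whose median exceeds `factor` times the fastest median."""
    best = min(medians.values())
    return sorted(d for d, m in medians.items() if m > factor * best)


# Hypothetical cold start timings (ms) across three device tiers.
samples = [
    ("flagship", 1100), ("flagship", 1150), ("flagship", 1050),
    ("mid-tier", 1900), ("mid-tier", 2000), ("mid-tier", 2100),
    ("entry-level", 3800), ("entry-level", 3600), ("entry-level", 4100),
]

medians = median_by_device(samples)
print(medians)
print("needs attention:", slow_devices(medians))
```

Medians are used here because a single slow run on a shared device should not dominate the comparison; percentiles would be a reasonable alternative.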

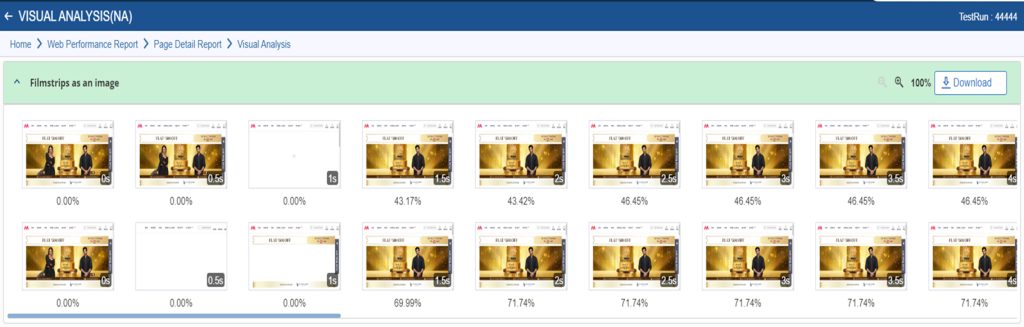

Rendering Visual Comparison

Cavisson enables visual validation across devices and browsers by comparing how applications render in different environments. This helps identify layout inconsistencies, UI distortions, and design gaps that can impact perceived user experience.

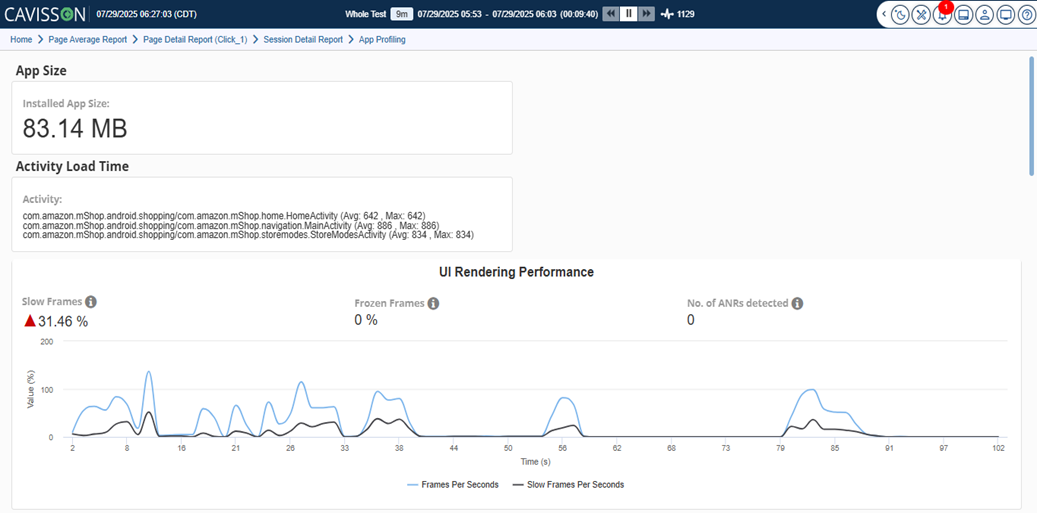

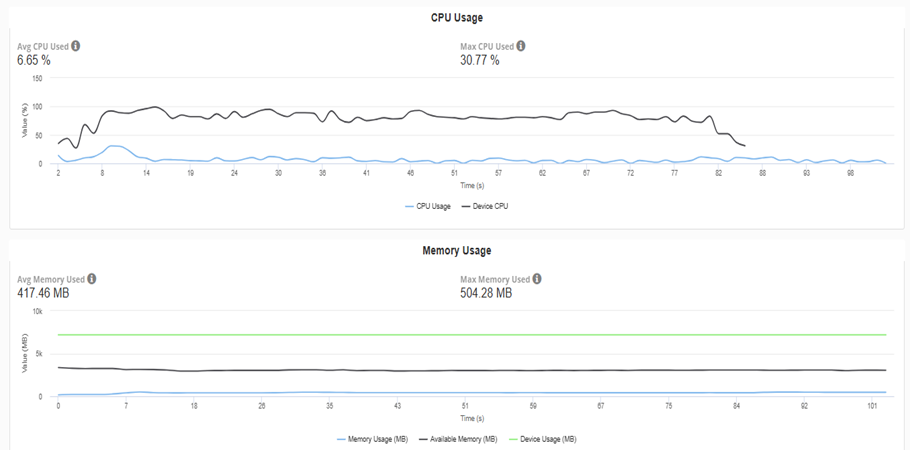

Device-Level Monitoring

During test execution, Cavisson captures deep device-level metrics such as CPU usage, memory consumption, battery impact, and network utilization. It also identifies slow frames, frozen frames, activity load times, and ANRs (Application Not Responding), providing a clear view of how applications perform on real devices.

Correlated Observability

Cavisson unifies server-side and device-side metrics on a shared timeline, enabling true end-to-end visibility. From the moment a user interacts with the screen to the final rendered output, every transaction is tracked and correlated with backend performance, turning raw data into actionable insights.

CI/CD Shift-Left Integration

Cavisson integrates Real Device Testing directly into CI/CD pipelines, allowing teams to run performance checks on every pull request. This ensures early detection of regressions and helps maintain performance baselines before code reaches production.
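A baseline check of this kind can be sketched independently of any particular tool. The example below is a generic illustration, not Cavisson's interface: the metric names, the baseline values, and the 10% tolerance are assumptions, and it treats every metric as "lower is better".

```python
def find_regressions(baseline, current, tolerance: float = 0.10):
    """Return metrics whose current value exceeds baseline by > tolerance.

    Assumes lower is better for every metric. Metrics missing from
    `current` are skipped rather than failed.
    """
    regressions = {}
    for metric, base in baseline.items():
        value = current.get(metric)
        if value is not None and value > base * (1 + tolerance):
            regressions[metric] = (base, value)
    return regressions


def gate_exit_code(regressions) -> int:
    """Non-zero exit code so a CI job can fail the pull request."""
    return 1 if regressions else 0


# Hypothetical baseline and pull request results from a real-device run.
baseline = {"cold_start_ms": 1800, "jank_ratio": 0.05, "checkout_ms": 2400}
current = {"cold_start_ms": 1850, "jank_ratio": 0.09, "checkout_ms": 2500}

regressions = find_regressions(baseline, current)
for metric, (base, value) in regressions.items():
    print(f"REGRESSION {metric}: baseline {base}, current {value}")
print("exit code:", gate_exit_code(regressions))
```

A CI step would pass the returned code to its own exit mechanism. A relative tolerance keeps normal run-to-run noise from failing builds; the right value depends on how stable the device pool is.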

Real Desktop Browser Coverage

RDT with Cavisson extends beyond mobile to include real desktop browser testing across different operating systems and versions. This ensures consistent performance and user experience across all digital touchpoints.

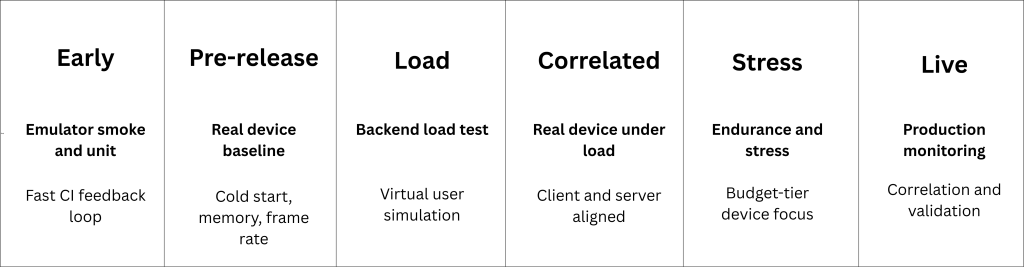

Integrating RDT into your delivery pipeline

The most effective performance engineering programs treat RDT not as a separate phase but as an integrated layer within their continuous testing architecture — present at every meaningful stage of delivery, from the first pull request to production monitoring.

A key stage is when backend load tests run simultaneously with real-device client sessions, with both time-series datasets correlated to identify the exact load threshold at which server latency begins to manifest as client-side degradation. This is the layer where functional accuracy meets performance at scale, and where most organizations still have a gap.
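The correlation step can be sketched as a join of two time series followed by a threshold search. Everything below is an assumption for illustration: the data shapes, the timestamps, the sample values, and the client budget are hypothetical, and the load is assumed to ramp up over time.

```python
def join_on_timestamp(server, client):
    """Join server and client samples that share a timestamp.

    server: {timestamp_s: (concurrent_users, server_latency_ms)}
    client: {timestamp_s: client_screen_time_ms}
    Returns rows of (timestamp_s, users, server_ms, client_ms), by time.
    """
    shared = sorted(server.keys() & client.keys())
    return [(ts, *server[ts], client[ts]) for ts in shared]


def degradation_threshold(rows, client_budget_ms: float):
    """Lowest load at which client screen time exceeds the budget."""
    over = [users for _, users, _, client_ms in rows
            if client_ms > client_budget_ms]
    return min(over) if over else None


# Hypothetical ramp-up: load doubles every 60 seconds.
server = {0: (100, 180), 60: (200, 190), 120: (400, 260), 180: (800, 520)}
client = {0: 900, 60: 950, 120: 1400, 180: 3200, 240: 3300}

rows = join_on_timestamp(server, client)
print("threshold (users):", degradation_threshold(rows, client_budget_ms=3000))
```

In this made-up data, server latency at 800 users is still only about half a second while the client-side screen time has already passed three seconds, which is the kind of gap that only shows up when both series are viewed together.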

With vs Without Real Device Testing: The Difference Users Actually Feel

Without Real Device Testing (RDT) embedded in the delivery pipeline, teams validate success based on emulator results and backend metrics—where everything appears fast, stable, and production-ready. Features pass, APIs respond within SLA, and CI/CD pipelines turn green. Yet, once released, the real experience tells a different story: slow screen rendering on mid-tier devices, frame drops during peak usage, crashes under memory pressure, and inconsistent behavior across networks. The gap lies in what traditional testing cannot capture—the physical realities of real devices.

With RDT integrated across the pipeline, this gap disappears. Every release is validated not just for functional correctness and backend performance, but for real-world user experience. Teams gain visibility into device-level behavior—CPU throttling, memory constraints, GPU rendering, and network variability—while correlating it directly with backend performance. The result is a shift from reactive firefighting in production to proactive performance engineering during development. What passes testing is no longer a theoretical success—it is a true reflection of how the application performs in the hands of real users.

The future of RDT

As 5G networks mature and edge computing becomes mainstream, RDT will evolve to ensure applications can harness high-speed, low-latency networks and operate reliably in decentralized, edge-native architectures. Artificial intelligence will play a growing role in automating test selection, predicting failure modes, and delivering deeper diagnostic insights across increasingly complex device ecosystems.

Testing for extended reality (XR) applications will become a standard RDT use case. IoT device testing will expand the scope of what “real device” means. Security, energy efficiency, and accessibility will all become first-class performance concerns — measured not just on ideal hardware, but across the full spectrum of devices that real users carry.

Throughout all of this, tighter integration with DevOps and CI/CD pipelines will make performance validation as continuous and automated as any other quality signal in the delivery process. The organizations that embrace this shift will be the ones that stop discovering performance problems in production — and start preventing them at the source.

Bridging the gap — at every scale

Real Device Testing is not a supplemental activity or a late-stage quality check. It is the discipline that bridges functional accuracy and performance at scale — ensuring that every validated user flow, every load-tested backend, and every CI-approved pull request translates into a genuinely fast, reliable experience on the devices your users actually hold.

At Cavisson Systems, we have built our platform around this principle from the ground up. Contact us today to integrate RDT into your testing strategy and close the gap between what your tests show and what your users feel.

References

1*. https://www.catchpoint.com/statistics

2*. https://www.catchpoint.com/statistics

3*. https://www.conductor.com/academy/page-speed-resources/faq/amazon-page-speed-study

4*. https://www.catchpoint.com/statistics