In most organizations today, functional testing and performance testing exist as parallel universes. Different teams, different tools, different scripts, different schedules. Your QA team builds a comprehensive Selenium suite to validate user journeys, while your performance team recreates those same scenarios from scratch in LoadRunner or JMeter. Sound familiar?

This artificial separation isn’t just inefficient—it’s costing you time, money, and quality.

The Hidden Cost of Testing in Silos

Let’s look at a typical enterprise testing workflow:

Your functional testing team spends weeks building automated test scripts that validate critical user paths—login sequences, shopping cart workflows, complex multi-step transactions. These scripts capture real user behavior, edge cases, and business logic.

Then your performance team starts from zero. They manually recreate those same user journeys in an entirely different tool, translating business workflows into performance scripts. They’re essentially doing the same work twice, just with different objectives in mind.

The costs add up:

- 40-60% duplication of effort across testing teams

- Weeks of additional development time for each release cycle

- Higher maintenance burden when application changes occur

- Increased risk of inconsistencies between functional and performance test scenarios

When you’re using a LoadRunner + Selenium combo (or similar pairing), you’re maintaining two completely separate codebases that test the same application. Every UI change means updating scripts in two places. Every new feature requires duplicate implementation work.

The Case for Unified Testing

Here’s a radical idea: what if your functional tests could become your performance tests?

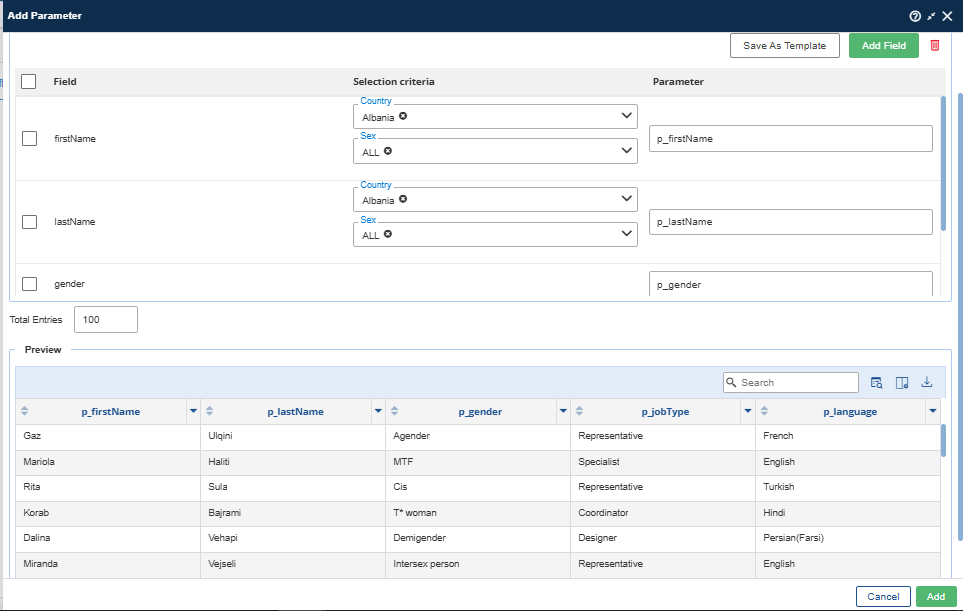

Modern testing platforms enable exactly this convergence. By using a unified scripting approach, you can:

Reuse test cases between functional and performance testing. Write once, use for both validation and load generation. The same test script that verifies your checkout process works correctly can be executed 1,000 times simultaneously to validate that it performs under load.

Cut the 40-60% duplicated effort. Redundant script development and maintenance go away. When business logic changes, update one script instead of two. When new features launch, create one test asset that serves multiple purposes.

Maintain consistency across testing types. Your performance tests represent the exact same user journeys that your functional tests validate. No more discrepancies between what QA tested and what performance testing measured.

Accelerate release cycles. With a single set of test assets, you can run functional regression and performance baselines in parallel, cutting overall testing time significantly.
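To make the "write once, use for both" idea concrete, here is a minimal Python sketch. It is an illustration only, not how any particular platform works: `FakeShop` is a hypothetical in-memory stand-in for the application, and `checkout_journey`, `run_functional`, and `run_load` are made-up names. A real setup would drive a browser or send protocol-level requests instead.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import median


class FakeShop:
    """Hypothetical in-memory stand-in for the application under test."""

    def add_to_cart(self, cart, item, price):
        time.sleep(0.001)  # simulate a little server work
        cart.append((item, price))

    def checkout(self, cart):
        time.sleep(0.001)
        return {"status": "confirmed", "total": sum(p for _, p in cart)}


def checkout_journey(shop):
    """One user journey: the single test asset shared by both modes."""
    cart = []
    shop.add_to_cart(cart, "book", 12.50)
    shop.add_to_cart(cart, "pen", 2.50)
    order = shop.checkout(cart)
    # The functional assertions travel with the script into the load run.
    assert order["status"] == "confirmed"
    assert order["total"] == 15.0
    return order


def timed(journey, shop):
    """Run one journey and return its duration in seconds."""
    start = time.perf_counter()
    journey(shop)
    return time.perf_counter() - start


def run_functional(journey, shop):
    """Functional mode: one virtual user, pass/fail on the assertions."""
    journey(shop)
    return True


def run_load(journey, shop, users=50):
    """Load mode: the same journey, run by many concurrent virtual users."""
    with ThreadPoolExecutor(max_workers=users) as pool:
        durations = list(pool.map(lambda _: timed(journey, shop), range(users)))
    return {"users": users, "median_s": median(durations), "max_s": max(durations)}


if __name__ == "__main__":
    shop = FakeShop()
    print("functional pass:", run_functional(checkout_journey, shop))
    print("load summary:", run_load(checkout_journey, shop, users=50))
```

The point of the design is that `checkout_journey` is defined once. The functional run and the load run differ only in how many times it is invoked and what is measured around it.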

What Unified Testing Looks Like in Practice

Consider a real-world example: an e-commerce company preparing for its annual Black Friday sale.

Traditional approach:

- The QA team builds Selenium scripts for checkout, search, and account management

- The performance team recreates these flows in LoadRunner

- Total development time: 6-8 weeks

- Maintenance for each release: Both teams update their separate scripts

Unified approach:

- A single team builds reusable test scripts

- Same scripts execute for functional validation (single user) and performance testing (thousands of concurrent users)

- Total development time: 3-4 weeks

- Maintenance: Update once, benefit everywhere

The unified approach doesn’t just save time; it also improves test coverage. Because creating new test scenarios doesn’t require duplicate work, teams can afford to test more user journeys and more edge cases, and to test them more thoroughly.
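As a quick check on the timeline in this example, the short calculation below works out the saving implied by going from 6-8 weeks to 3-4 weeks. The helper name `percent_saved` is just for illustration. Both ends of the range come out to a 50% reduction, which sits inside the 40-60% duplication figure cited earlier.

```python
def percent_saved(before_weeks, after_weeks):
    """Percentage reduction going from before_weeks to after_weeks."""
    return (before_weeks - after_weeks) / before_weeks * 100


# Figures from the example above: (traditional, unified) development time in weeks.
low_end = percent_saved(6, 3)
high_end = percent_saved(8, 4)
print(f"low end: {low_end:.0f}% saved, high end: {high_end:.0f}% saved")
# prints "low end: 50% saved, high end: 50% saved"
```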

Beyond Tool Consolidation

This isn’t just about using one tool instead of two. It’s about fundamentally rethinking how we approach quality assurance.

When functional and performance testing share the same foundation:

- Developers can contribute to both functional and performance test automation without learning multiple frameworks

- DevOps can integrate all testing types into CI/CD pipelines more seamlessly

- Business stakeholders get faster feedback on both correctness and scalability

- Test teams can focus on expanding coverage rather than duplicating effort
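One way to picture the CI/CD benefit is a single release gate that checks correctness and speed together. The sketch below is an assumption about how such a gate might look, not a feature of any specific pipeline tool: `release_gate`, the sample latencies, and the 500 ms budget are all hypothetical.

```python
from statistics import quantiles


def p95(latencies_ms):
    """95th percentile of the samples (needs at least two data points)."""
    # quantiles(..., n=100) returns 99 cut points; index 94 is the 95th.
    return quantiles(latencies_ms, n=100, method="inclusive")[94]


def release_gate(functional_passed, latencies_ms, p95_budget_ms):
    """Return a list of gate failures; an empty list means the build may proceed."""
    failures = []
    if not functional_passed:
        failures.append("functional assertions failed")
    observed = p95(latencies_ms)
    if observed > p95_budget_ms:
        failures.append(f"p95 {observed:.0f} ms exceeds budget {p95_budget_ms} ms")
    return failures


if __name__ == "__main__":
    # Hypothetical results a pipeline step might collect from one shared suite.
    sample_latencies = [120, 130, 125, 140, 135, 128, 132, 138, 127, 900]
    problems = release_gate(True, sample_latencies, p95_budget_ms=500)
    for problem in problems:
        print("GATE FAILED:", problem)
    # A real pipeline step would turn this into its process exit code.
    print("exit code:", 1 if problems else 0)
```

Because both signals come from the same run of the same scripts, the pipeline needs one stage and one verdict instead of two loosely related ones.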

Making the Shift

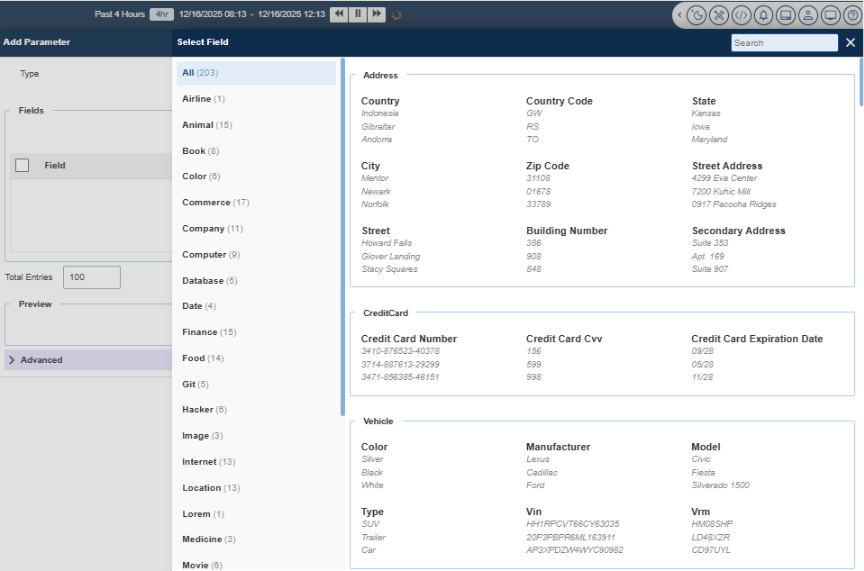

Moving from siloed to unified testing requires both technical capability and organizational change. Look for platforms that support:

- Protocol-level and browser-level test creation from a single interface

- Script reusability across functional and non-functional testing

- Integrated reporting that correlates functional failures with performance degradation

- Developer-friendly scripting that both QA and performance engineers can contribute to
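To illustrate what correlating functional failures with performance degradation can mean, the sketch below groups per-step results from one run and puts error rate next to median latency. The `records` data is invented for the example, and `summarize` is a hypothetical helper, not any product's reporting API.

```python
from collections import defaultdict
from statistics import median

# Hypothetical per-step records from one load run of a shared script:
# (step name, passed its functional assertion?, duration in milliseconds)
records = [
    ("login", True, 110), ("login", True, 120), ("login", True, 115),
    ("search", True, 210), ("search", True, 230), ("search", True, 220),
    ("checkout", True, 400), ("checkout", False, 2900), ("checkout", False, 3100),
]


def summarize(records):
    """Group records by step and report error rate next to median latency."""
    by_step = defaultdict(list)
    for step, passed, duration_ms in records:
        by_step[step].append((passed, duration_ms))
    report = {}
    for step, rows in by_step.items():
        failures = sum(1 for passed, _ in rows if not passed)
        report[step] = {
            "runs": len(rows),
            "error_rate": failures / len(rows),
            "median_ms": median(d for _, d in rows),
        }
    return report


for step, stats in summarize(records).items():
    print(f"{step:9s} error_rate={stats['error_rate']:.2f} median_ms={stats['median_ms']}")
```

In this made-up data, the checkout step is both the slowest and the only one failing its assertions. That kind of link is hard to spot when functional and performance results live in separate reports.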

The transition pays dividends quickly. Teams typically see:

- Reduction in test development time

- Faster time-to-market for new features

- Better correlation between functional bugs and performance issues

- Higher quality releases with more comprehensive test coverage

The Bottom Line

In today’s fast-paced development environment, you can’t afford to test everything twice. The artificial wall between functional and performance testing creates inefficiency, inconsistency, and delays.

By breaking down these silos and embracing unified testing approaches, organizations can deliver higher-quality software faster with less effort. Your functional tests and performance tests validate the same application against the same user journeys. They shouldn’t live in separate worlds.

It’s time to bring them together.

Cavisson Systems provides unified testing platforms that enable teams to reuse test assets across functional, performance, and monitoring use cases. Learn how leading enterprises are reducing testing overhead while improving quality.